John F. Nash Jr.'s Game of "Hex" (1949)

“Oh, by the way, what was it that they were playing?” asked von Neumann. “Nash,” answered Tucker, allowing the corners of his mouth to turn up ever so slightly.

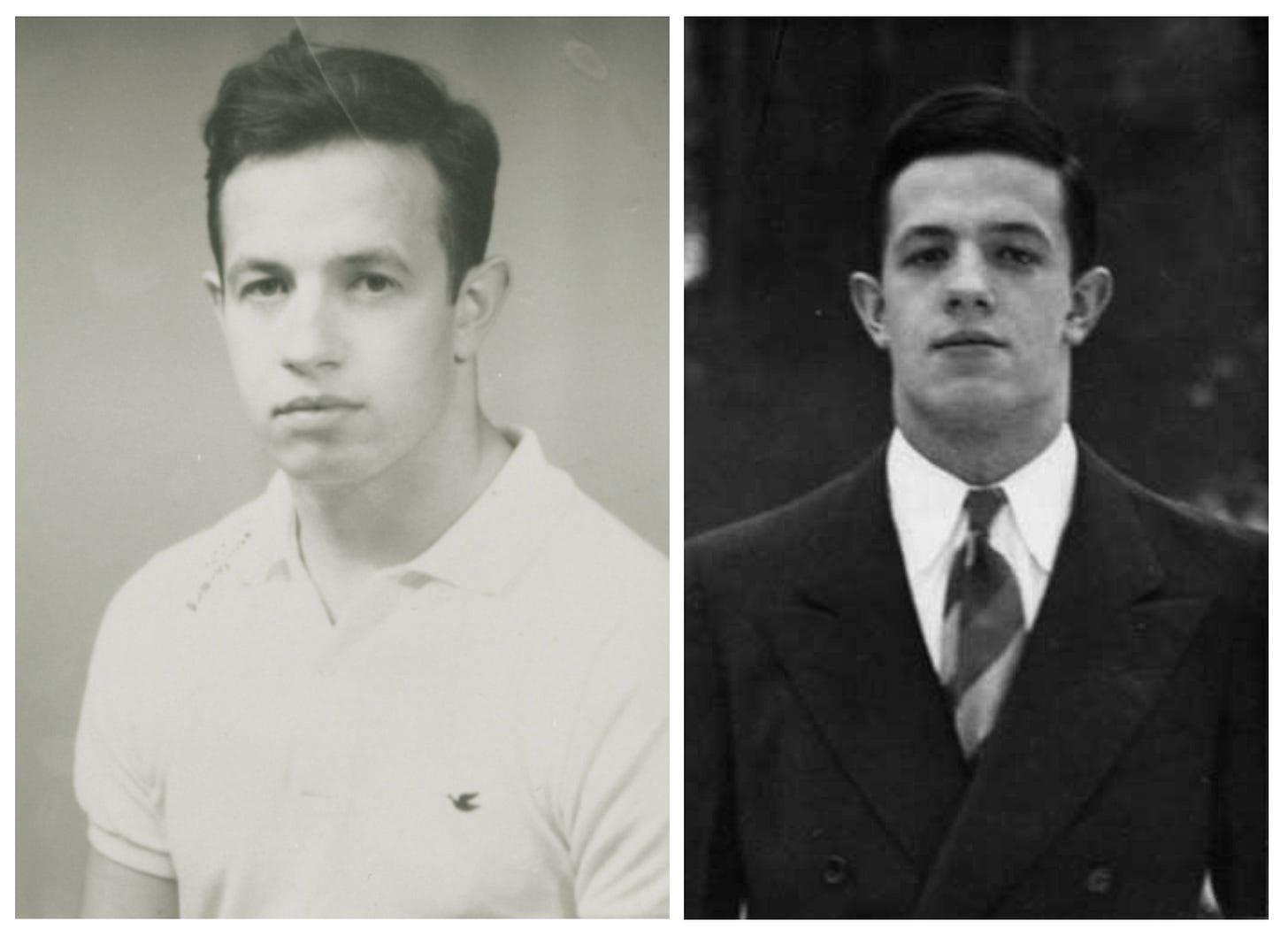

By the late 1940s, the favorite pastime of faculty and graduate students in Fine Hall at Princeton University was playing board games, including the well-known Go and chess, as well as the lesser-known Kriegspiel. During this time, ‘beautiful mind’ John Forbes Nash Jr. (1928–2015) invented his own game, “Nash”, later marketed by Parker Brothers under the name “Hex”. This essay tells the story of its discovery.

Princeton University (1948–51)

Nash entered graduate school when he was 20 years old, three years after leaving his hometown of Bluefield, West Virginia. At the time, Princeton’s math department was filled with brilliant minds. It was led by Solomon Lefschetz (1884–1972), who, together with Ralph Fox (1913–73) and Norman Steenrod (1910–71), headed the leading topology research group in the country. Emil Artin (1898–1962) led algebra. A student of Lefschetz, Albert W. Tucker (1905–95) …